A family of omnimodal world models designed to jointly process and generate language, vision, audio, and action sequences.

Tingle Li

I am a fourth-year CS Ph.D. student at UC Berkeley, advised by Gopala Anumanchipalli. I am part of the Berkeley Artificial Intelligence Research (BAIR) Lab, where I have been fortunate to collaborate with Andrew Owens.

Previously, I had the privilege of working with Hang Zhao at Tsinghua University and Ming Li at Duke University.

My research uses sound as a window into physical common sense, revealing how objects and scenes are composed, behave, and interact in ways vision and language alone may not capture. I am grateful to be supported by the Sony Research Award.

Publications

A benchmark for evaluating physically-grounded video-to-audio generation.

Learn sim-to-real robot policies with generative audio.

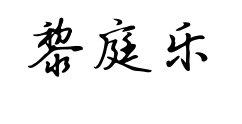

Interactively generate object-specific sounds from visual scenes.

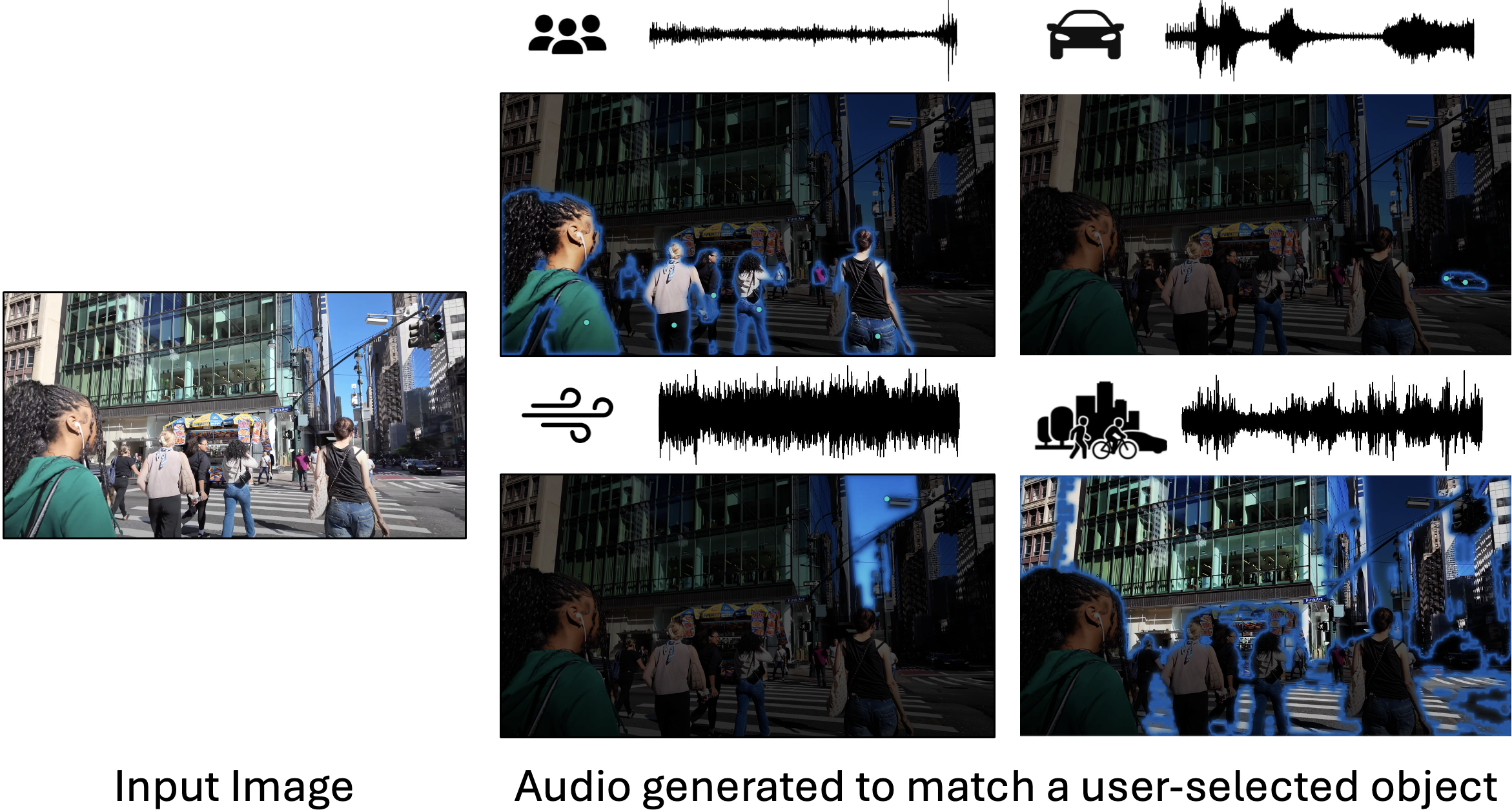

Manipulate audio texture using exemplar-based analogy.

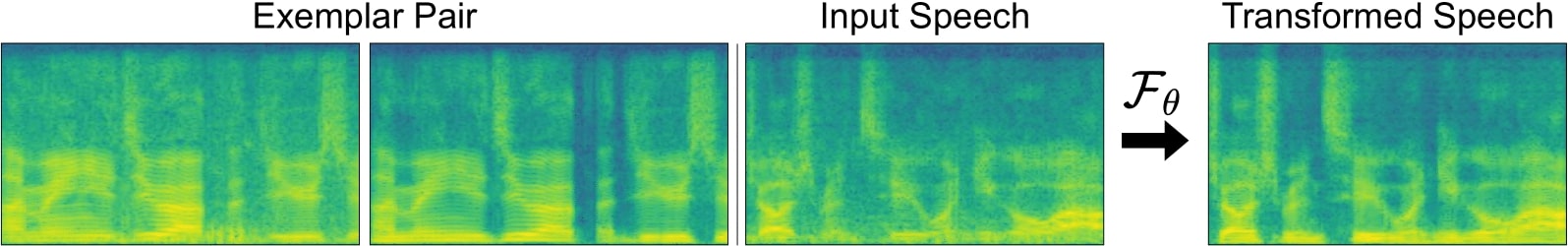

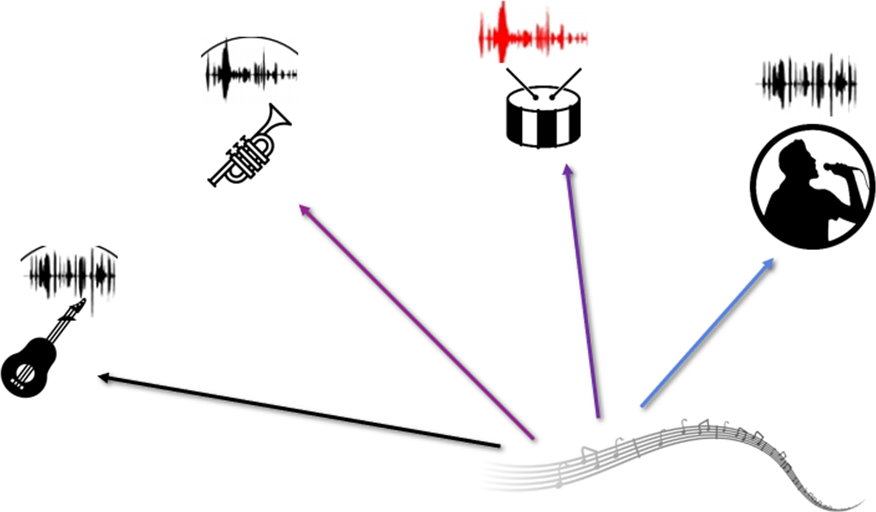

Restyle a sound to fit another scene, using an audio-visual conditional example taken from that scene.

We synthesize speech from MRI videos.

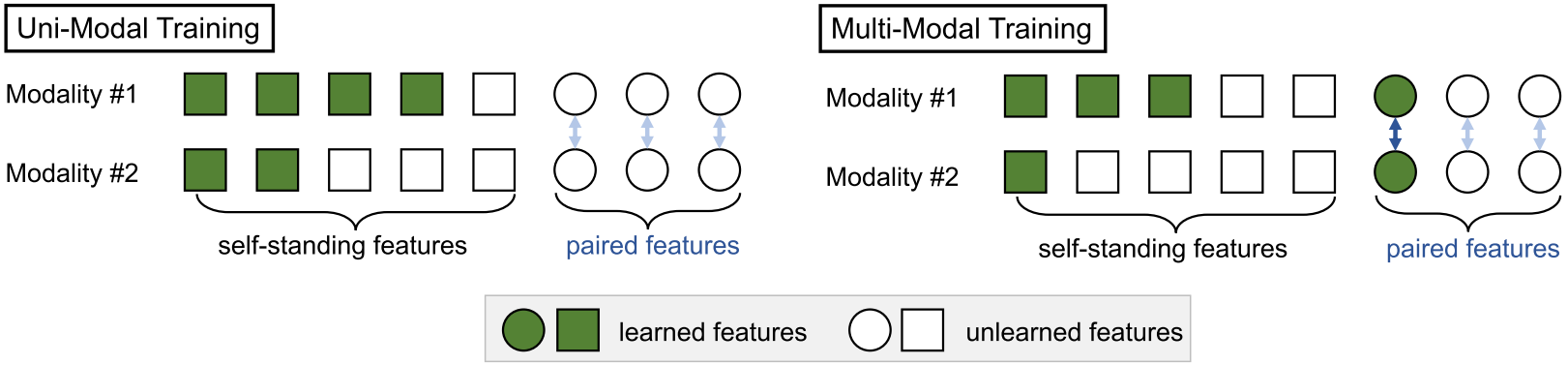

Improving generalization in multimodal learning through uni-modal feature analysis.

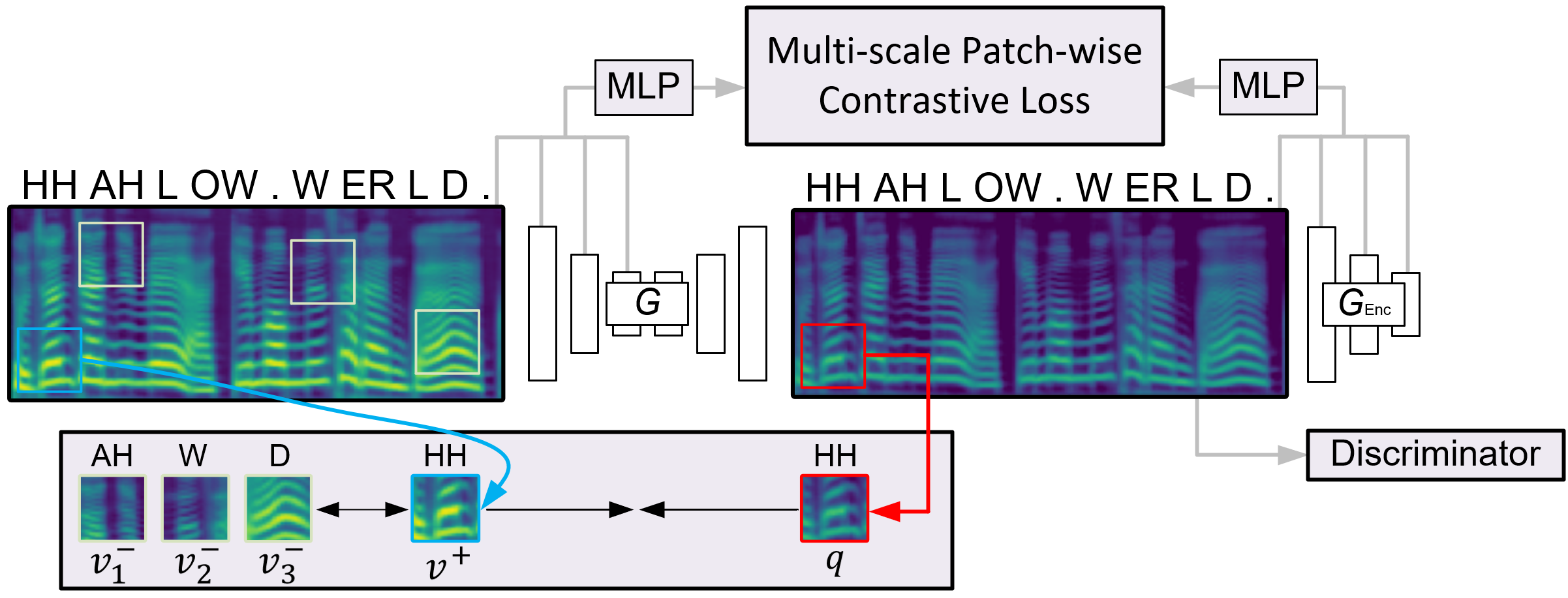

We learn from unlabeled data to manipulate the style of an image using sound.

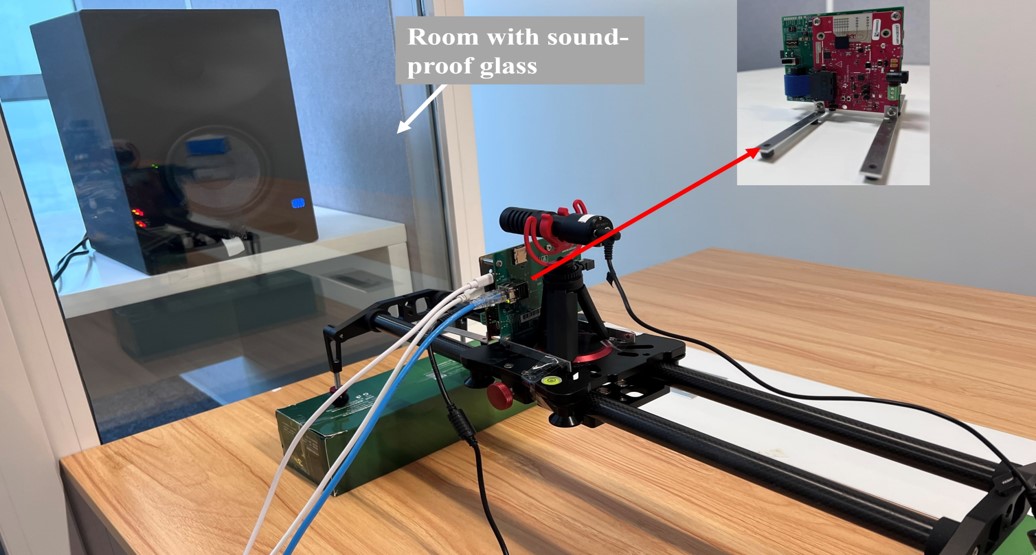

High-quality speech recovery system for millimeter-wave radar without deafness.

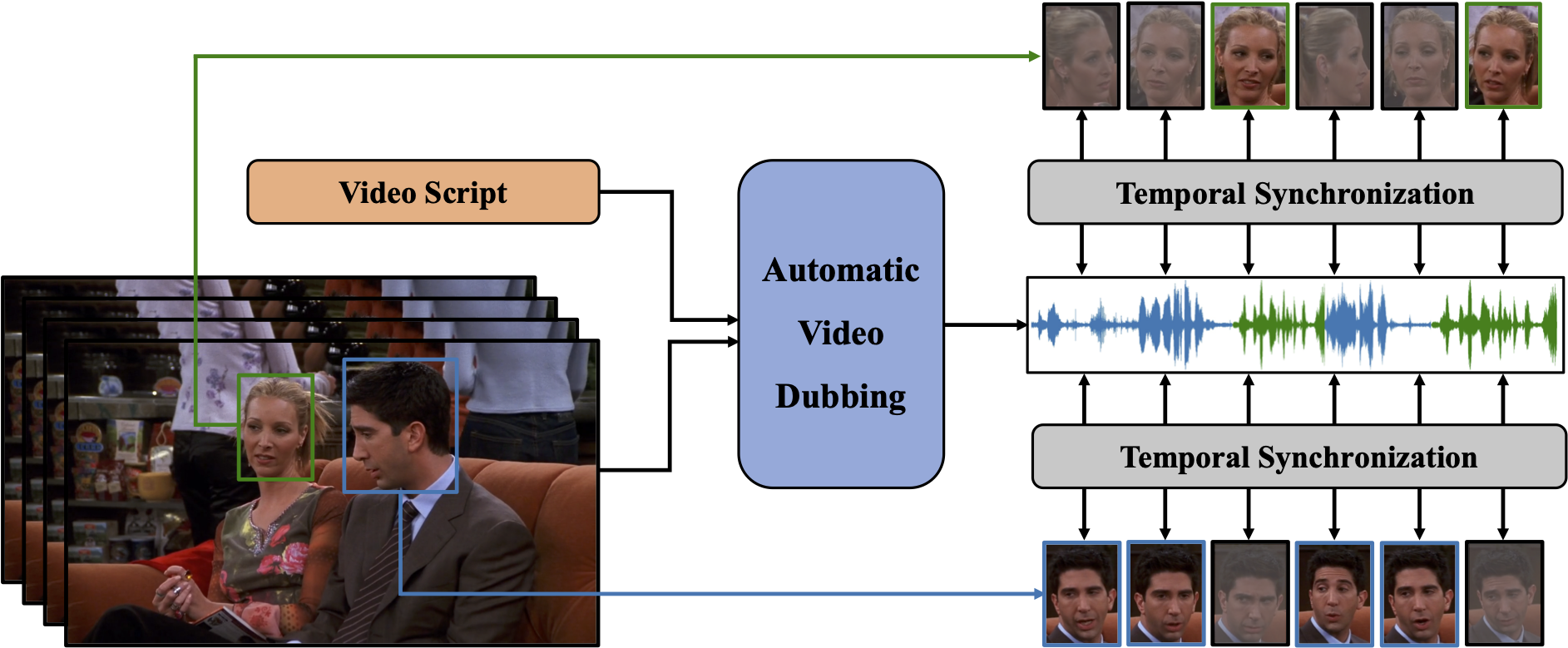

Automatic video dubbing driven by a neural network.

One-way GAN training for non-parallel voice conversion.

Sliced attention for music source separation by focusing on local intra-chunk features.

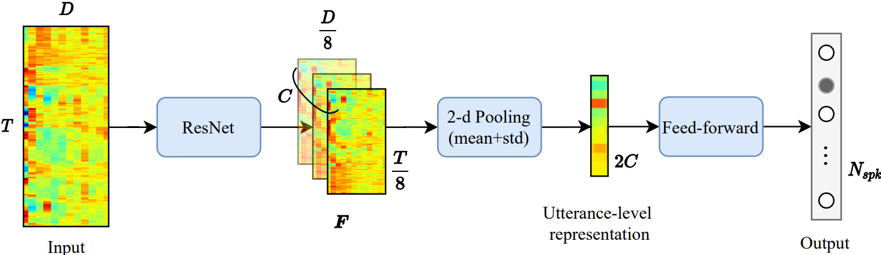

Winning systems for the NASA Fearless Steps Challenge on speech activity detection and speaker identification.

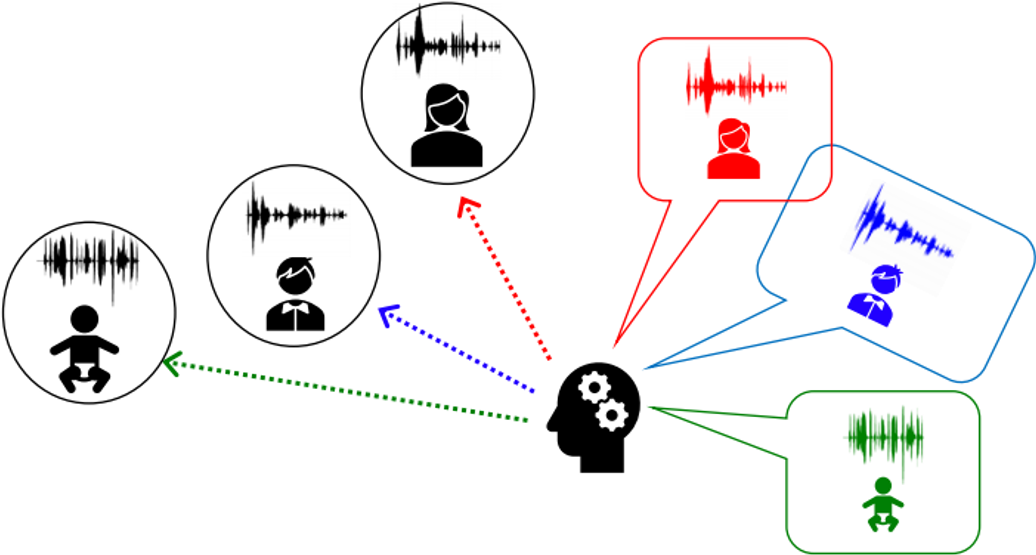

Attention-based target speaker separation with improved efficiency and generalization.

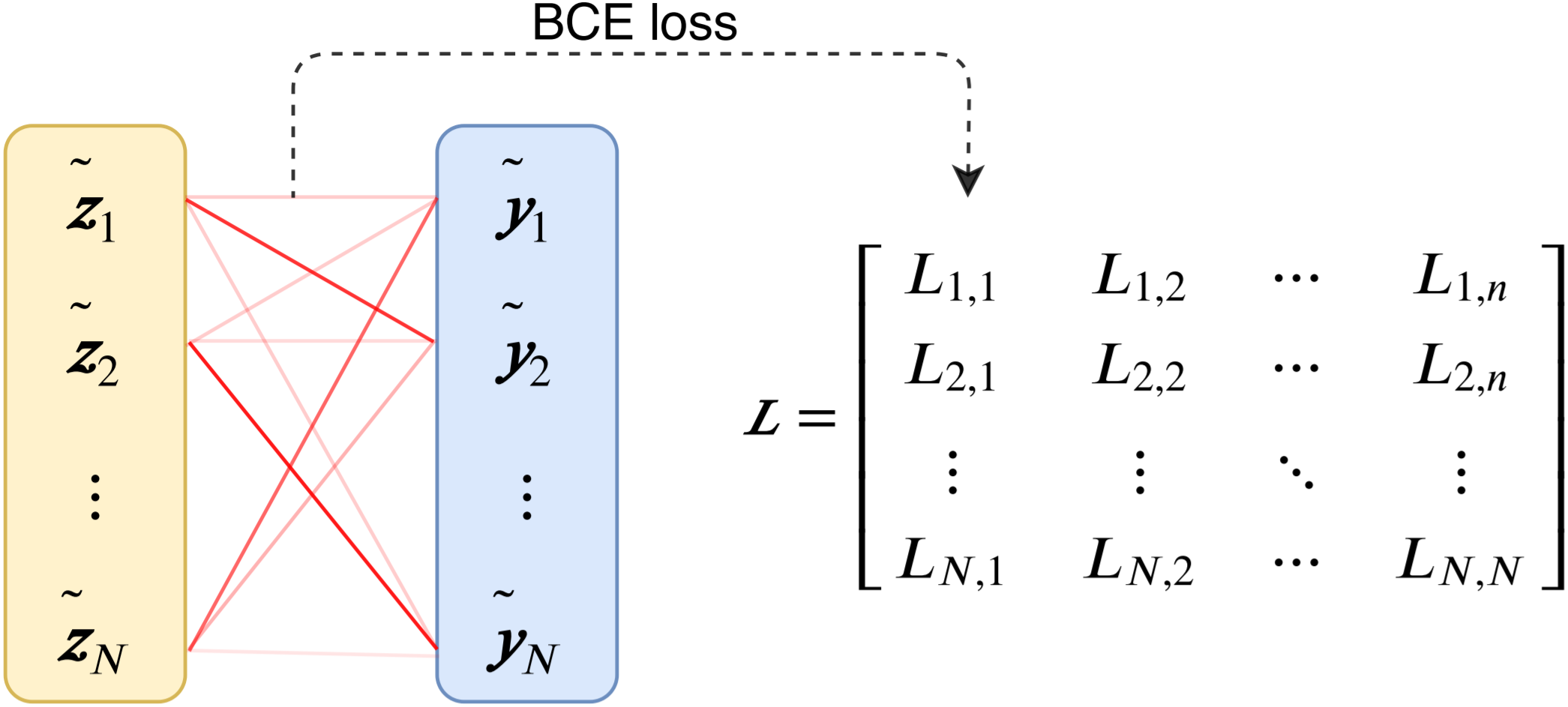

A Hungarian algorithm-based loss that speeds up end-to-end speaker diarization.