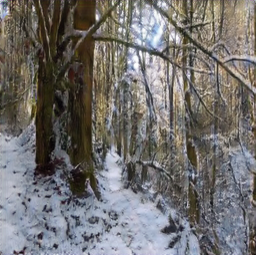

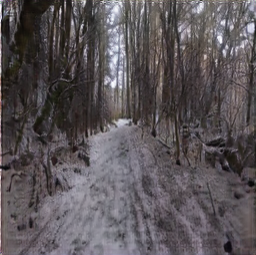

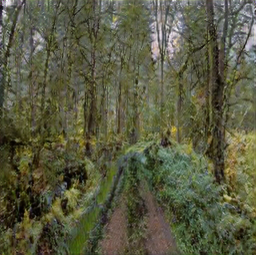

Audio-driven image stylization. We manipulate the style of an image to match a sound. After training with an unlabeled dataset of egocentric hiking videos, our model learns visual styles for a variety of ambient sounds, such as light and heavy rain, as well as physical interactions, such as footsteps.

Abstract

From the patter of rain to the crunch of snow, the sounds we hear often reveal the visual textures within a scene. In this paper, we present a method for learning visual styles from paired audio-visual data. Our model learns to manipulate the texture of a scene to match a sound, a problem we term audio-driven image stylization. Given a dataset of paired, unlabeled audio-visual data, the model learns to manipulate input images such that, after manipulation, they are more likely to co-occur with other input sounds. In quantitative and qualitative evaluations, our sound-based model outperforms label-based approaches. We also use audio as an intuitive embedding space for image manipulation, and show that manipulating audio, such as by adjusting its volume, results in predictable visual changes.

Results Video

Dataset

Click on video to unmute/mute. Use arrows to navigate.

Audio-driven Image Stylization Results

Restyling Video by Manipulating its Sound

Click on video to play/pause with synced audio.

Varying Sound Volume Results

Mixing Sound Results

Paper and Supplementary Material

Tingle Li, Yichen Liu, Andrew Owens, Hang Zhao

Learning Visual Styles from Audio-Visual Associations

ECCV 2022

@inproceedings{li2021learning,

title={Learning Visual Styles from Audio-Visual Associations},

author={Tingle Li, Yichen Liu, Andrew Owens, Hang Zhao},

booktitle={ECCV},

year={2022}

}Acknowledgements

This template was originally made by Phillip Isola and Richard Zhang for a colorful ECCV project; the code can be found here.